All Articles

By Alex Newman | 07.18.25

Last month, the U.S. Supreme Court famously found that parents have a right to opt their children out of controversial sex indoctrination. Yet, the same federal court system has been claiming for decades that the Bible and prayer are unconstitutional.

By Kathy Valente | 07.17.25

Join Public School Exit’s free, six‑part online Summer Series (Thursdays, July 17–Aug 21) for expert-led “how‑to” instruction on homeschooling, micro-schools, co-ops, biblical worldview, and more. Featuring top leaders—including Dran Reese, Alex Newman, Diane Douglas, and Israel Wayne—this series gives practical tools and community support to families ready to exit public school and educate with purpose. Register now!

By Ecce Verum | 07.17.25

Abortion pills already account for the majority of abortions in America—and now Illinois is preparing to mandate their availability on public college campuses. HB 3709, now on Governor Pritzker’s desk, would embed abortion access at public colleges and universities statewide. We urge you to speak out before he signs this dangerous and deadly legislation into law.

By David E. Smith | 07.16.25

The Families’ Rights and Responsibilities Act seeks to protect parents' fundamental right to direct their children’s education, healthcare, and upbringing. With growing federal overreach, this bill draws a needed line in the sand. Congress failed to act in 2023—will they get it right this time?

By Kathy Athearn | 07.15.25

It has been 10 years since the United States Supreme Court declared in Obergefell v. Hodges that same-sex couples have the legal right to marry. More Americans are waking up to the profound effects that the ruling (and LGBT activism) have had on the country. Parents are tired of LGBT messaging being pushed on their kids...

By Thomas Hampson | 07.14.25

After years of promises and speculation, the DOJ and FBI now say they’ve released all the Epstein files they intend to—and plan no further action. But survivors and the public still demand to know who took part in the sex trafficking. The truth must be fully revealed.

By David E. Smith | 07.12.25

Christians who hold to the historic and traditional teachings of the Bible believe that God created us from the beginning “male and female” (Gen 5:2; Mat 19:4; Mk 10:6). Biology and physiology empirically affirm that there are only two genders. Christians who hold to this theologically orthodox and scientific view believe that “gender-confirmation” surgery, hormone-blockers, and cross-dressing damage humans.

By Bill Muehlenberg | 07.11.25

There are, of course, entire libraries filled with books about socialism, Marxism, the Soviet Union, and so on. In my own personal library I have several hundred volumes on these topics. But this book is one of the newest and best available so far. It offers a sweeping yet detailed history of Communism covering the past 175 years or so.

By Ecce Verum | 07.09.25

Remember the fear, the mandates, and the sudden power wielded by government officials and various institutions during COVID? While many have moved on, critical questions remain unanswered: Was the threat exaggerated? Were vaccines truly safe? How was the data manipulated? Who got rich during this "crisis"? U.S. Senators Rand Paul and Ron Johnson are working to expose hidden truths, insisting that the full story of the pandemic must still come out.

By Thomas Hampson | 07.07.25

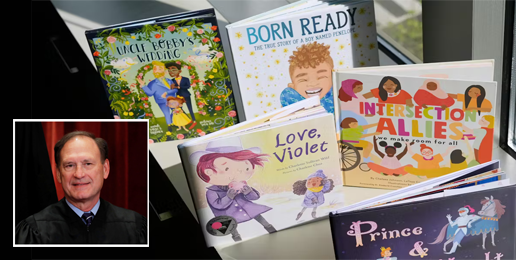

The SCOTUS ruling in Mahmoud v. Taylor is a game-changer for parental rights in public education. While the case centers on LGBTQ+ storybooks in classrooms, its implications reach far beyond reading lists. For the first time in decades, the Court has affirmed that parents have a constitutional right to shield their children from instruction that contradicts their religious beliefs—without needing to prove coercion.